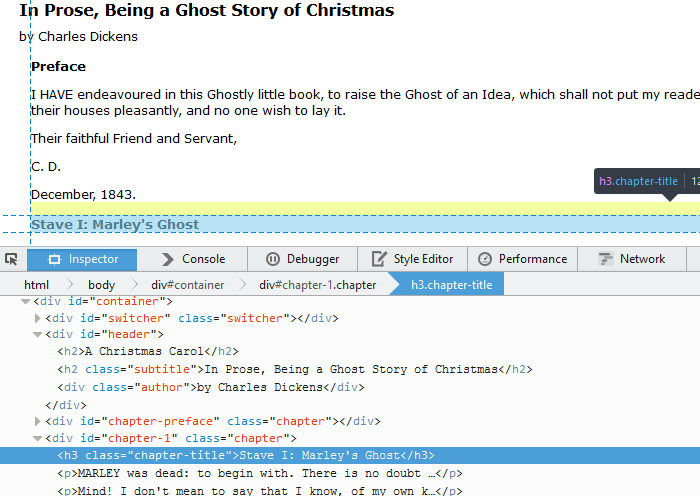

To track down a bug, I needed to log the HTTP requests sent from one of our web services to a third-party web service. (We hosted that service, but the software was not developed in-house.)

Our web service was written with RESTEasy, a framework I was not especially familiar with. (I prefer the Spring stack and always create new web services with Spring Boot.) The code that called the third-party web service looked like this:

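```java
import javax.ws.rs.client.Entity;
import javax.ws.rs.client.Invocation.Builder;

// builder targets the third-party endpoint; form holds the fields being posted
builder.buildPost(Entity.form(form)).invoke();
```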
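For reference, `Entity.form(form)` sends the fields as an `application/x-www-form-urlencoded` body, and that encoded text is what I wanted to see in the log. The sketch below only illustrates roughly what such a body looks like; it is not RESTEasy's code. It assumes single-valued fields held in a plain `Map` (a real `javax.ws.rs.core.Form` is multi-valued), UTF-8, and Java 10 or later for `URLEncoder.encode(String, Charset)`. `FormBodyPreview` is a hypothetical name.

```java
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;
import java.util.Map;
import java.util.stream.Collectors;

// Hypothetical helper: previews a form body, assuming single-valued fields and UTF-8
final class FormBodyPreview {

    // Joins name=value pairs with '&', percent-encoding each name and value
    // (spaces become '+'). The map's iteration order decides the field order,
    // so pass a LinkedHashMap to keep it stable.
    static String encode(Map<String, String> fields) {
        return fields.entrySet().stream()
                .map(e -> URLEncoder.encode(e.getKey(), StandardCharsets.UTF_8)
                        + "=" + URLEncoder.encode(e.getValue(), StandardCharsets.UTF_8))
                .collect(Collectors.joining("&"));
    }
}
```

For example, the fields `user` = `jane doe` and `q` = `a&b` (in that order) encode to `user=jane+doe&q=a%26b`.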

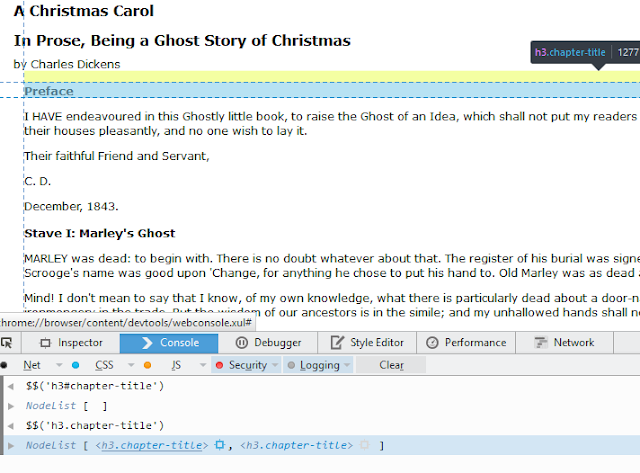

Surprisingly, there was no obvious way to get at the request body being sent. According to various Stack Overflow answers, the way to log outbound JAX-RS client requests is to implement ClientRequestFilter and register the implementation as a provider in the container:

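```java
import java.io.IOException;

import javax.ws.rs.client.ClientRequestContext;
import javax.ws.rs.client.ClientRequestFilter;
import javax.ws.rs.ext.Provider;

// Assumes SLF4J as the logging API
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;

@Provider
public class MyClientRequestLoggingFilter implements ClientRequestFilter {

    private static final Logger LOG = LoggerFactory.getLogger(MyClientRequestLoggingFilter.class);

    @Override
    public void filter(ClientRequestContext requestContext) throws IOException {
        // getEntity() returns null for requests without a body (e.g. GET), so check first
        if (requestContext.hasEntity()) {
            // Logs the entity object's toString(), not the serialized request body
            LOG.info(requestContext.getEntity().toString());
        }
    }
}
```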

You then configure web.xml to scan for providers:

```xml
<context-param>
    <param-name>resteasy.scan.providers</param-name>
    <param-value>true</param-value>
</context-param>
```

Quite a few answers warn that, because ClientRequestContext.getEntity() returns an Object, its default toString() may not give you the request body you expect. To log the actual body, the entity has to be serialized (marshalled) into its wire format first.
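One route I didn't end up taking, sketched here under assumptions rather than tested against RESTEasy: JAX-RS also defines a `WriterInterceptor`, whose `aroundWriteTo(WriterInterceptorContext)` method runs around the actual serialization of the entity. Inside it you could save `context.getOutputStream()`, replace it via `context.setOutputStream(...)` with a stream that keeps a copy of every byte, call `context.proceed()`, and log the copy afterwards. The copying stream itself needs only the JDK; `CapturingOutputStream` below is a hypothetical name.

```java
import java.io.ByteArrayOutputStream;
import java.io.FilterOutputStream;
import java.io.IOException;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;

// Hypothetical helper: forwards every byte to the real stream and keeps a copy for logging
final class CapturingOutputStream extends FilterOutputStream {

    private final ByteArrayOutputStream copy = new ByteArrayOutputStream();

    CapturingOutputStream(OutputStream delegate) {
        super(delegate);
    }

    @Override
    public void write(int b) throws IOException {
        out.write(b);   // forward to the real stream
        copy.write(b);  // and remember it
    }

    @Override
    public void write(byte[] b, int off, int len) throws IOException {
        // FilterOutputStream would otherwise write one byte at a time; forward the whole chunk
        out.write(b, off, len);
        copy.write(b, off, len);
    }

    // Everything written so far, decoded as UTF-8 (an assumption about the body's charset)
    String captured() {
        return new String(copy.toByteArray(), StandardCharsets.UTF_8);
    }
}
```

Decoding as UTF-8 is safe for a form post, whose body is percent-encoded ASCII, but other entity types may use a different charset. Buffering the copy in memory is also only reasonable for small request bodies.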

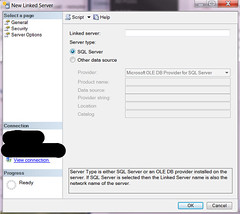

After banging my head against a wall for an afternoon, I decided to take a completely different approach and looked up how to enable request logging in Apache httpd instead. This turned out to be a much more straightforward way to get what I needed. The mod_dumpio module can be used to dump all input received and all output sent by the server into the error log. This works because the requests pass through httpd; the third-party service was one we hosted ourselves.

You need mod_dumpio present in the Apache httpd installation (on Windows, check that mod_dumpio.so is in c:\apache-install-dir\modules). Stop the service, then edit httpd.conf to include the following lines:

```apache
LoadModule dumpio_module modules/mod_dumpio.so

ErrorLog "logs/error.log"
LogLevel debug

DumpIOInput On
DumpIOOutput On
LogLevel dumpio:trace7
```

The ErrorLog and LogLevel lines were already present in my httpd.conf. I changed the LogLevel to debug and added the last three lines to turn on the dumpio module. After restarting the server, all HTTP requests and responses were logged to logs/error.log.

Lesson learnt: if an approach turns out to be more complicated than expected, it's worth taking a step back and rethinking it.